Systems View

Operations Research/Analytics Consulting

1/23/2022 - 3:00 p.m.

Daniel Kahneman, Olivier Sibony, Cass Sunstein. 2021. Noise: A Flaw in Human Judgment. Hachette Book Group, New York.

The focus of this book is decision making where human judgement plays a large role; for example, medical diagnosis, criminal sentencing, hiring, and insurance underwriting. The authors show how these types of decisions often suffer from considerable bias and noise (of various described subtypes) that cause widely disparate outcomes for seemingly similar situations. This can be very costly (e.g., a patient gets an incorrect diagnosis, insurance is underpriced, or the wrong person gets hired) and/or unethical (e.g., criminal sentences are unduly harsh or lenient). People who make these judgements tend to overestimate their predictive and judgmental prowess, a phenomenon well known to O.R. practitioners. Many examples are provided where algorithms, or even simple formulas or scoring rules, are superior to expert judgement in producing a relatively noise-free outcome. Aggregated independent judgements are superior to single judgements. Relative judgements (ranking or pairwise comparisons) are less noisy than absolute ones. To further mitigate noise and bias, the authors describe how to conduct “noise audits” and provide bias observation checklists.
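The point about aggregating independent judgements can be illustrated with a small simulation. This is only a sketch under simple assumptions that are mine, not the book's: every judge is unbiased, judgement errors are independent and normally distributed, and the numbers (a true value of 100, a noise level of 20, panels of 9 judges) are made up for illustration.

```python
import random
import statistics

TRUE_VALUE = 100.0   # hypothetical "correct" judgement
JUDGE_NOISE = 20.0   # hypothetical standard deviation of one judge's error


def spread_of_panel_judgements(n_judges, trials, rng):
    """Standard deviation of the panel average across many simulated cases."""
    panel_averages = []
    for _ in range(trials):
        judgements = [rng.gauss(TRUE_VALUE, JUDGE_NOISE) for _ in range(n_judges)]
        panel_averages.append(statistics.fmean(judgements))
    return statistics.stdev(panel_averages)


rng = random.Random(0)  # fixed seed so the run is repeatable
single_spread = spread_of_panel_judgements(n_judges=1, trials=5000, rng=rng)
panel_spread = spread_of_panel_judgements(n_judges=9, trials=5000, rng=rng)

print(f"spread of a single judgement:      {single_spread:.1f}")
print(f"spread of a 9-judge average:       {panel_spread:.1f}")
```

Under these assumptions the spread of the average shrinks roughly with the square root of the number of judges, so nine independent judges cut the noise to about a third. The benefit weakens if the judges share information or influence one another, which is why independence matters.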

John Kay, Mervyn King. 2020. Radical Uncertainty: Decision-Making Beyond the Numbers. Norton, New York.

This book shows how probability-based, utility-maximizing decision models are usually inappropriate in scenarios with large stakes, high uncertainty, and limited or no relevant prior experience to inform decision makers. Oversimplified models, while intellectually satisfying, may fail to capture real-world dynamics. Yet such models are still used to confidently evaluate these scenarios. Rare “black swan” events are often assumed away or cannot even be conceived (“unknown unknowns”). Think of pandemics (!), economic collapses, bridge failures, or breakthrough technology products. We cannot count on subjective probabilities to rescue us, even when tendered by “experts”, or even when averaged over multiple sources. Moreover, outcomes (tree branches) can multiply beyond what the largest whiteboard could possibly illustrate. In seemingly direct contrast to Kahneman et al., the authors suggest that greater reliance on expert human judgement is the answer! But there is not really a contradiction. Kahneman et al. are looking at situations where similar, but not identical, decisions are made repeatedly over time. Thus, historical data is available to analyze. Kay and King are referring to decision situations that are, in the main, unique. They suggest that decision makers first gather the available information to determine ‘what is going on here’. Rather than assess subjective probabilities, they should determine the overall robustness of outcomes to courses of action. A ‘reference narrative’ should be established and evaluated for its resilience to possible risks. With the right amount of expert judgement, tempered by humility, radical uncertainty is to be embraced.

I think all O.R. practitioners would benefit from absorbing the lessons from this pair of books and applying them to decision-making situations we may encounter.

7/8/2020 - 5:00 p.m.

In an earlier posting below, I discussed the importance of good predictors in machine learning. A corollary to this is that leaving out important confounding predictors can lead to bad predictions and erroneous conclusions regarding causal relationships. This is a type of model specification error. The problem is more common when using linear regression models versus the non-parametric algorithms typically used in machine learning. As an example, I was recently involved in a study examining the causal effects of historical air pollution levels (i.e., PM2.5 particulates) on COVID-19 death rates. A recent unpublished, yet widely reported, study based on U.S. county-level data and regression models had suggested a statistically significant and causal relationship between the two, but we were skeptical. We were able to build a dataset similar to the one used in the original study, including demographic, climate, economic, and population data, but we also included some additional readily computed variables. We found that the simple addition of latitude and log-population density covariates could reverse the conclusions of the study! We published a paper in Global Epidemiology describing our findings. Speaking of predictions, I am sure that we will soon encounter studies that “prove” that the COVID-19 pandemic is worsened by, if not caused by, climate change!
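The effect of a left-out confounder on a regression coefficient can be shown with synthetic data. The sketch below is not the study's data or model: the variable names, coefficients, and sample size are all hypothetical, and the data are generated so that the exposure has no true effect on the outcome while a single confounder drives both.

```python
import numpy as np


def ols_coefficients(columns, y):
    """Ordinary least squares fit; returns [intercept, coef_1, coef_2, ...]."""
    design = np.column_stack([np.ones(len(y)), *columns])
    coefficients, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coefficients


rng = np.random.default_rng(0)
n = 5000

# Hypothetical confounder (think of something like log population density).
confounder = rng.normal(size=n)
# The exposure is correlated with the confounder...
exposure = 0.8 * confounder + rng.normal(size=n)
# ...but the outcome depends only on the confounder, not on the exposure.
outcome = 2.0 * confounder + rng.normal(size=n)

naive = ols_coefficients([exposure], outcome)
adjusted = ols_coefficients([exposure, confounder], outcome)

print(f"exposure coefficient, confounder omitted:  {naive[1]:.2f}")
print(f"exposure coefficient, confounder included: {adjusted[1]:.2f}")
```

With the confounder omitted, the regression attributes the confounder's influence to the exposure and reports a large positive coefficient. Once the confounder is included, the exposure coefficient falls to roughly zero, which is the true value in this synthetic setup.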

3/1/2020 - 5:00 p.m.

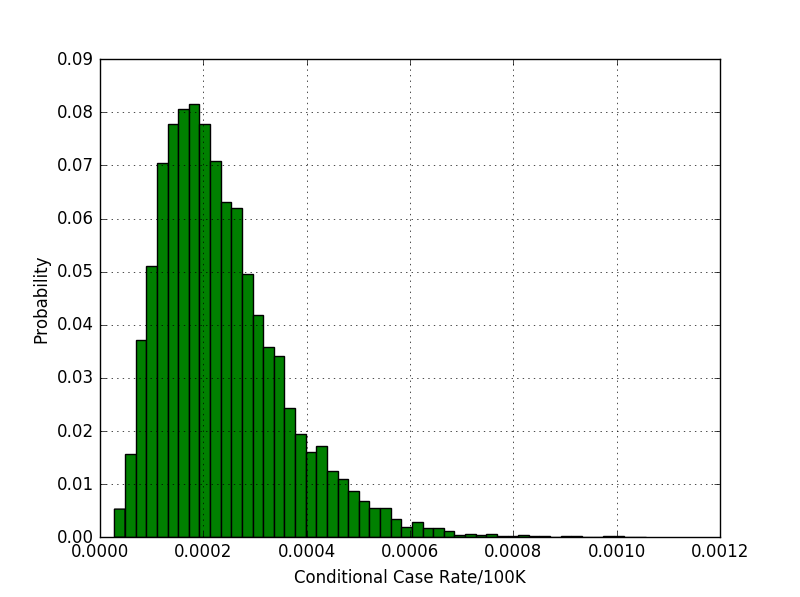

I have a publication coming out soon in the journal Risk Analysis, entitled: Quantifying Human Health Risks from Virginiamycin Use in Food Animals in China. Like many papers that Dr. Tony Cox and I have collaborated on, the underlying model is a probabilistic risk simulation, the output of which is shown in the accompanying figure. The cases represent additional people with QD (quinupristin-dalfopristin) resistant VREF (vancomycin-resistant Enterococcus faecium) infections expected from widespread use of the antibiotic feed additive, virginiamycin, on chickens in China. We found an extremely low risk, equating to 1 additional case every few decades in the worst case.

The study provided another example of the important role that can be played by probabilistic, causal risk analysis in complex decision making.

1/10/2020 - 11:00 a.m.

In my experience, developing good predictors (“features”) in your input data has a much greater impact on solution quality than excessively tuning algorithm parameters or finding the absolute best predictive algorithm among good alternatives. I first learned this when competing in Kaggle competitions, where the quantitative effect of such an approach could be immediately seen on the leaderboard. You would often see competitors who focused only on algorithm-tweaking move incrementally upward over time, with marginal improvements in their scores. But the addition of a single good predictor variable could result in leapfrogging to the top tier. My recent experience in the health care industry reinforced this perception, but also highlighted another aspect: data development can consume far more time and resources than algorithm development. In our case, we were working with medical claims data to make predictions about future utilization and costs, a daunting task, especially at the individual level. Identifying a category of medical event often involved complex logic over diagnostic codes, procedure codes, facility codes, revenue codes, date sequences, and more. Throw in the fact that the logic sometimes needed to operate efficiently over millions or even billions of medical claim records. The result of all that effort might be a single column of reasonably reliable binary indicators (note that production runs would typically involve computing many such predictors simultaneously). In other applications, the choice of feature is not at all clear-cut. For example, a single feature might be needed to represent a specific chronological sequence of events, or geographical juxtaposition of interacting elements. Identifying these from raw data can be very computationally intensive. Recent developments, such as scalable graph data structures and algorithms (e.g., https://www.oreilly.com/library/view/graph-algorithms/9781492047674/), can be useful here.
Given the ubiquity of often-free, high-quality machine learning software libraries (e.g., https://scikit-learn.org/stable/index.html), application development should always put the greatest focus on the data.
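To make the idea of a sequence-based binary indicator concrete, here is a toy sketch. Everything in it is hypothetical: the `Claim` record layout, the placeholder codes (`DX_A`, `ER`, `INPATIENT`), the 30-day window, and the rule itself (an emergency visit with a target diagnosis followed by an inpatient stay). Real claims logic is far more involved and would need to run at much larger scale.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Claim:
    member_id: str
    service_date: date
    diagnosis_code: str  # placeholder labels, not real diagnostic codes
    facility_code: str   # placeholder labels, not real facility codes


def er_then_inpatient_flag(claims, target_dx, window_days=30):
    """Return {member_id: 0 or 1}.

    A member gets 1 if an ER claim with a target diagnosis is followed by
    an inpatient claim on the same day or within `window_days` afterwards.
    """
    by_member = defaultdict(list)
    for claim in claims:
        by_member[claim.member_id].append(claim)

    flags = {}
    for member_id, member_claims in by_member.items():
        er_dates = [c.service_date for c in member_claims
                    if c.facility_code == "ER" and c.diagnosis_code in target_dx]
        inpatient_dates = [c.service_date for c in member_claims
                           if c.facility_code == "INPATIENT"]
        flags[member_id] = int(any(
            0 <= (admit - visit).days <= window_days
            for visit in er_dates for admit in inpatient_dates
        ))
    return flags


claims = [
    Claim("m1", date(2019, 1, 5), "DX_A", "ER"),
    Claim("m1", date(2019, 1, 20), "DX_A", "INPATIENT"),   # 15 days later
    Claim("m2", date(2019, 2, 1), "DX_A", "ER"),
    Claim("m2", date(2019, 4, 15), "DX_A", "INPATIENT"),   # outside the window
    Claim("m3", date(2019, 3, 1), "DX_A", "INPATIENT"),    # before the ER visit
    Claim("m3", date(2019, 3, 10), "DX_A", "ER"),
    Claim("m4", date(2019, 1, 1), "DX_B", "ER"),           # not a target diagnosis
    Claim("m4", date(2019, 1, 3), "DX_B", "INPATIENT"),
]

flags = er_then_inpatient_flag(claims, target_dx={"DX_A"})
print(flags)
```

Only member `m1` satisfies the rule here. The output is exactly the kind of single binary column described above, ready to be joined to other predictors.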

12/10/2019 - 7:23 p.m.

I recently reviewed the functionality of a Python software module called GeoPandas (http://geopandas.org/). In the past, Systems View has developed complex routing software in C#. The application required writing low-level code for tasks such as converting between different map coordinate reference systems, computing distances, and determining attributes of polygons. GeoPandas performs these and other complex mapping tasks under the hood - a few lines of code do what previously required lengthy mathematical, geometric, and trigonometric subroutines in C#. GeoPandas can also read directly from geographic shapefiles, which are a standard for GIS. I see how all of this functionality could be leveraged for an upcoming client engagement, where it would be useful to determine the intersections between geographic subunits (e.g., zip code areas as shapefiles) with known demographics, and superimposed “effect” regions. In this way, demographically driven effects could be predicted for the region, perhaps in conjunction with machine learning techniques. For those interested, Kaggle has a great free introductory course at https://www.kaggle.com/learn/geospatial-analysis.
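As a rough sketch of the intersection idea, the snippet below overlays two made-up "zip code" rectangles with a made-up effect region and apportions population by overlapping area. The shapes, column names, and population figures are hypothetical, and the area-proportional split assumes population is spread evenly within each zip code area, which real data would rarely satisfy. In practice the layers would be loaded with `gpd.read_file(...)` rather than built by hand.

```python
import geopandas as gpd
from shapely.geometry import box

# Two hypothetical zip code areas in a projected CRS (units are meters).
zips = gpd.GeoDataFrame(
    {"zip_code": ["A", "B"], "population": [1000, 3000]},
    geometry=[box(0, 0, 2, 2), box(2, 0, 4, 2)],
    crs="EPSG:3857",
)

# One hypothetical "effect" region straddling both zip code areas.
effect = gpd.GeoDataFrame(
    {"effect_name": ["zone_1"]},
    geometry=[box(1, 0, 3, 2)],
    crs="EPSG:3857",
)

# Record each zip code's full area before cutting it up.
zips["zip_area"] = zips.geometry.area

# Keep only the pieces of each zip code that fall inside the effect region.
pieces = gpd.overlay(zips, effect, how="intersection")

# Apportion population by the share of each zip code's area that is affected.
pieces["area_share"] = pieces.geometry.area / pieces["zip_area"]
pieces["affected_population"] = pieces["population"] * pieces["area_share"]

print(pieces[["zip_code", "area_share", "affected_population"]])
```

The resulting table has one row per zip-code-by-effect-region piece, which could then serve as input to a predictive model.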

11/1/2019 - 8:15 p.m.

I started Systems View in 1997. In 2013, I was asked to consult for NextHealth Technologies, a Denver start-up that was looking to use advanced analytics to help health insurance companies better engage with their members to reduce costs and improve health outcomes. In 2014, I agreed to join NextHealth as an employee, and advanced to the position of SVP of Analytics before retiring in October 2019. I had some great experiences at NextHealth, including the design and/or development of many of the algorithms at the core of their product. I had the opportunity to learn a lot more about simulation-optimization, machine learning, bootstrap and Bayesian statistics, decision trees, and propensity score matching - not to mention learning about start-up company evolution, venture capital, stock options, boards, and all manner of corporate management processes.

During my time at NextHealth, I kept the lights on at Systems View with a few small legacy projects for some previous clients. Now that I have left full-time external employment, I have the opportunity to apply my knowledge to some new endeavors. (I am starting with this first cut at a redesigned web site!) Looking forward to this next phase.

10/30/2019 - 10:15 a.m.

I was privileged to assist with the creation of an interesting book in collaboration with Dr. Tony Cox and Richard Sun. I have worked with Tony, of Cox Associates, for over 20 years, and this book describes several key projects we have worked on. These projects all involved health risk analysis, and the book describes in detail the methodologies we used. The emphasis of these studies was on causal analytics, versus the many studies out there that mistake correlation for causation. In fact, many of our efforts were motivated by a desire to (in)validate conventional wisdom in areas such as animal antibiotics and air pollution, where correlation has been misinterpreted. However, the book is much more than a compilation of studies. Tony does an excellent job of drawing higher-level lessons and providing a how-to guide for risk analysts. The book definitely has a point of view. My favorite chapter title: “Improving risk management: from lame excuses to principled risk management.” Available from the publisher or Amazon.